Imagine walking into a math class where the teacher hands you a calculator and says, “Please, use this for the exam.”

Today, that sounds normal. But fifty years ago? It was unthinkable. Teachers worried that the calculator would destroy our ability to do basic math. They thought our brains would turn to mush if we didn’t do long division by hand.

Now, history is repeating itself. But this time, the calculator is GPT-5.

When “generative AI” first exploded onto the scene in late 2022, schools went into a panic. They banned ChatGPT on school Wi-Fi. They installed “detection software” to catch cheaters. They treated AI like a digital virus that needed to be quarantined.

But a groundbreaking new study published in 2025 suggests the “panic phase” is finally over. We are entering a new, controversial, and exciting era called Postplagiarism.

So, why are the strictest universities in the world suddenly inviting the ultimate “cheating machine” into the classroom?

The Mystery of Postplagiarism

The study, led by researcher Sarah Elaine Eaton, introduces a concept that sounds impossible: Postplagiarism. To understand this, we have to unlearn what we know about “cheating.”

In the traditional view, authorship is binary. Either you wrote it (good), or the machine wrote it (bad). If you used a robot to write your essay, you were stealing credit for work you didn’t do.

But the arrival of GPT-5 in the classroom has blurred that line. The researchers argue that we are moving toward “hybrid authorship.” In this new world, trying to separate the human from the AI is like trying to separate the eggs from a baked cake. It is a mixed process.

This doesn’t mean honesty is dead. It means the definition of honesty is changing. In a postplagiarism world, teachers stop asking, “Did you write this?” and start asking, “How did you guide the machine to create this?”

The “Cyborg” Student

Think of it like a pilot flying a modern jet. The pilot doesn’t physically pull the cables to move the flaps anymore; the computer does that. But we still need the pilot to make the critical decisions, navigate the storm, and land the plane.

In this new era, you are the pilot. The AI is just the plane. Educational experts are now focusing on how students can maintain their “human core” while using these powerful engines. [1]

[1] Buehler, M. (2026). Generative AI in Higher Education. Medium. https://medium.com/@michi.buehler/generative-ai-in-higher-education-e1

Behind the Science: The “Reasoning” Engine

To understand why schools are relaxing their rules, we have to look at how the technology has evolved. Why is GPT-5 allowed when the original ChatGPT was banned?

The answer lies in the architecture. You might have used older versions of ChatGPT (like GPT-4o). Those were impressive, but they were essentially just fancy autocomplete tools. They guessed the next word based on probability.

GPT-5 is different. In the Assessment & Evaluation in Higher Education study, Eaton and her colleagues describe the new wave of models as “reasoning engines” rather than just text predictors.

The Scratch Paper Analogy

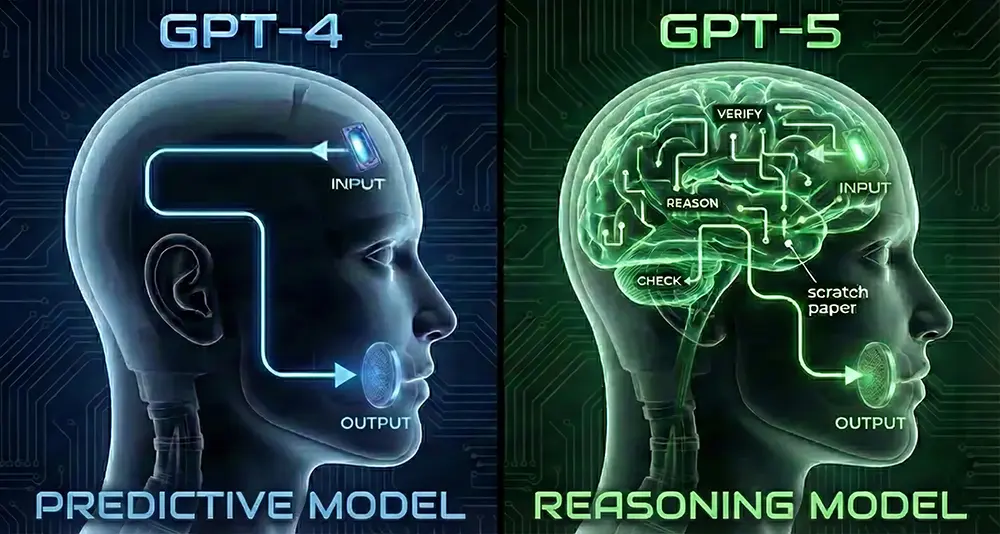

Figure 1: The “Scratch Paper” Metaphor. A simplified artistic visualization of how reasoning models differ from their predecessors. While GPT-4 (Left) predicts the next word linearly, GPT-5 (Right) uses an internal “verification loop” to check its logic before speaking. Credit: Seriously Scientific.

Imagine you ask a student to solve a hard math problem in their head. They might blurt out a wrong answer because they rushed. That is how old AI worked. It just shouted the first thing it calculated.

Now, imagine you give that student a piece of scratch paper. They write down the steps. They check their work. They spot a mistake and fix it before they give you the answer.

That is what reasoning models do. They verify their own logic before they speak. The primary research indicates this “Chain-of-Thought” processing allows models to reduce logical errors by up to 65% in complex tasks compared to older baselines.
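The scratch paper analogy can be made concrete with a toy Python sketch. It only illustrates the analogy (blurt the first guess versus check each guess before answering); it is not a description of how GPT-5 or any real model works internally. The function names and the example equation are hypothetical.

```python
from typing import Callable, Iterable, Optional


def blurt(candidates: Iterable[int]) -> Optional[int]:
    """The 'in your head' approach: return the first guess, unchecked."""
    return next(iter(candidates), None)


def scratch_paper(candidates: Iterable[int],
                  check: Callable[[int], bool]) -> Optional[int]:
    """The 'scratch paper' approach: return the first guess that passes the check."""
    for guess in candidates:
        if check(guess):
            return guess
    return None  # no guess survived checking


def is_root(x: int) -> bool:
    """Check a guess by substituting it into x^2 - 5x + 6 = 0."""
    return x * x - 5 * x + 6 == 0


if __name__ == "__main__":
    guesses = [1, 2, 3]  # hypothetical sequence of guesses
    print("blurted answer:", blurt(guesses))
    print("checked answer:", scratch_paper(guesses, is_root))
```

The point of the design is that checking a guess (plugging it back into the equation) is much cheaper than producing a correct guess in one shot, which is why a verification step can catch mistakes the first attempt misses.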

The Trap: Why “Newer” Isn’t Always “Safer”

However, while the academic theory is sound, the real-world application has a dangerous pitfall: blind trust.

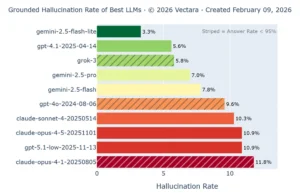

Many students assume that because GPT-5 is the “newest” model, it must be the most accurate. But independent data contradicts the academic ideal. As Figure 2 shows, the latest “Low” version of GPT-5 actually performs worse than its predecessor.

Figure 2: The “Newer is Better” Myth. This 2026 benchmark reveals a critical trap: the new gpt-5.1-low model (Red) actually hallucinates more (10.9%) than the older gpt-4o (9.6%). Meanwhile, models like gemini-2.5 (Green) are leading the pack in reliability. Source: Vectara Hallucination Leaderboard.

If a student blindly uses gpt-5.1-low for a history paper, the benchmark suggests that roughly one in nine of its outputs (10.9%) will contain a hallucination. That is a higher risk than if they had stuck with the older GPT-4o (9.6%). The lesson is that “Stewardship” isn’t just about using AI; it is about choosing the right AI.
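To put the two rates from Figure 2 side by side, the short Python sketch below works through the arithmetic. The five-output scenario and the assumption that each output fails independently are illustrative simplifications, not part of the benchmark.

```python
# Hallucination rates as quoted in Figure 2 of this article.
RATES = {"gpt-5.1-low": 0.109, "gpt-4o": 0.096}


def relative_increase(new: float, old: float) -> float:
    """How much larger `new` is than `old`, as a fraction of `old`."""
    return (new - old) / old


def chance_of_at_least_one(rate: float, n_outputs: int) -> float:
    """Probability that at least one of n outputs contains a hallucination.

    Assumes every output fails independently at the same rate, which is a
    simplification made for illustration only.
    """
    return 1.0 - (1.0 - rate) ** n_outputs


if __name__ == "__main__":
    gap = relative_increase(RATES["gpt-5.1-low"], RATES["gpt-4o"])
    print(f"gpt-5.1-low hallucinates {gap:.1%} more often than gpt-4o")
    for model, rate in RATES.items():
        # Five outputs is a hypothetical workload, e.g. five summarised sources.
        risk = chance_of_at_least_one(rate, 5)
        print(f"{model}: {risk:.1%} chance of at least one hallucination in 5 outputs")
```

The absolute gap between the two models is only 1.3 percentage points, but under these assumptions the risk compounds quickly once a student relies on several outputs in the same paper.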

From Police to Stewards

The most exciting part of this research is about the teachers. For a long time, teachers had to act like police officers. They spent hours scanning papers for “AI usage,” using detection tools that were often wrong. It was exhausting for them and frustrating for you.

The study shows a shift toward Stewardship.

A “Steward of Learning” doesn’t ban the tool; they teach you how to use it safely. They are moving away from the “Gotcha!” moment of catching a cheater. Instead, they are focusing on “AI Literacy”: teaching students the ethical and technical skills to control these systems. [2]

[2] Microsoft. (2026). Learn about Copilot in Education. Microsoft Education. https://www.microsoft.com/en-us/education/products/copilot-in-education

The Death of the “Five-Paragraph Essay”

This means the homework is changing. If a robot can write a perfect five-paragraph essay in three seconds, why should you?

Stewards are assigning new types of work. They might ask you to:

- Debate the AI: Generate an argument using GPT-5, then write a critique explaining why the AI is wrong.

- Reverse Engineering: You get the final answer, and you have to grade the AI on how it got there.

- Oral Defense: You write the paper with AI, but you have to stand up in class and defend the ideas without notes.

The Science of “Cognitive Offloading”

Is this lazy? Critics still say that using AI makes our brains weak. But the research points to a concept called Cognitive Offloading.

Cognitive Offloading is a fancy term for a simple idea: we use tools to handle the “heavy lifting” so our brains can focus on the “smart stuff.”

The “Calculator for Words” Analogy

Think about the calculator again. You offload the “boring” part (long division) to the calculator so you can focus on the “smart” part (solving the physics problem).

With GPT-5, you offload the “boring” part (structuring sentences, checking grammar, organizing data) to the AI. This frees up your brain to focus on the “smart” part: the arguments, the creativity, and the critical thinking. The school isn’t testing your ability to spell anymore; they are testing your ability to think.

The Wicked Problem: Equity

While the “postplagiarism” era sounds great, the researchers warn of a “wicked problem.” The problem is access.

If GPT-5 is a “super-tutor” that helps you get straight A’s, what happens to the students who can’t afford the subscription? We risk creating a two-tier system: the “enhanced” students who have AI pilots, and the “un-enhanced” students flying solo. Universities and governments are now racing to figure out how to provide these “reasoning engines” to everyone, not just the wealthy. [3]

[3] OpenAI. (2026). OpenAI Certification Courses. OpenAI Academy. https://openai.com/index/openai-certificate-courses/

Why This Matters

This research confirms that the “ban it all” approach is dying. The future of school isn’t about hiding from AI. It is about learning to dance with it.

We are moving from an era of suspicion to an era of collaboration. If you can master these tools now, if you can learn to be the pilot rather than just the passenger, you aren’t just getting better grades. You are preparing for a job market that will expect you to use these “reasoning engines” every single day.

Editor’s Note: The Vulnerability of “New”

While this article explores the promise of “Reasoning Engines,” my own research into AI vulnerabilities highlights a critical caveat: these models are not infallible. The data shown in Figure 2 reflects a broader trend I have observed, in which “newer” often implies “experimental” rather than “perfect.”

In my own scientific workflow, I often find that relying on a single model is rarely the best strategy. I frequently use Google’s Gemini 2.5 for factual grounding and Claude for pure coding tasks, while GPT-5 excels at abstract logic. The future of education won’t just be about “using AI”; it will be about model agnosticism. Students must learn which model belongs in their toolkit for the specific task at hand.

You may have noticed I linked to the classic Bill Nye The Science Guy ‘Computers’ episode as the related video. Yes, it is over 30 years old! 📼

While it is obviously a tad outdated now, that is exactly why I included it. It does a brilliant job of highlighting the sheer velocity of advancement in computing. Watching it today gives you a stark perspective on the progression of technology, going from the basics of binary to the ‘Reasoning Engines’ of GPT-5 in the span of a single career. Definitely worth a watch for the contrast alone!